Archive for February 2012

Selling Ice to Eskimos

Facebook has recently discovered that beyond the uncanny valley of personalized marketing lies the bottomless pit of invasive identity misappropriation.

But there is a deeper problem here. I’ve said it before and I will say it again. Facebook has the data, but they do not have users with shopping intent. Nobody goes to Facebook to buy stuff. Facebook is for meeting friends, like a bar or a club.

Even with the best products in the world and the most detailed private information, it is not easy to sell stuff to strangers in bars, unless you’re selling beer.

Big Data is Big

We happen to have one sat in the next building over. Would you guys like to see it?

Oh, boy! Would we!

About twenty other Oracle employees and I are attending a Cloudera training course on Hadoop in the Oracle Reading office. Five days packed with information covering a whole new ecosystem filled with some pretty crazy beasts.

Our heads are spinning like a room full of network-attached storage and our pens are humming like a data center cooling system as we attempt to map and reduce every little piece of data they throw at us.

During one of the breaks, we get the opportunity to go see the Oracle Big Data Appliance. Standing in front of this enormous machine, it finally dawns on me what a massive bulk of raw power this really is. A seemingly countless number of disks are mounted in a box taller and wider than I am. Each disk can hold three terabytes of data.

Facet Based Predictions in Oracle Real-Time Decisions

[ Crossposting from the Oracle Real-Time Decisions Blog. ]

The analytical models method detailed in a previous post is not only extremely valuable for reporting, but can also be used to predict likelihoods for things other than regular choices. We can, for instance, generate predictions based on statistics for an attribute of a choice, rather than the choice itself. We use the term facet based prediction to describe this advanced form of generating predictions.

This novel approach to modeling can be applied to significantly improve predictive accuracy and model quality. It can also facilitate the rapid transfer of existing learnings to newly created choices based on their facet values. These capabilities can be of use to practically all implementations, but they are of utmost importance in cases where the number of choices is very high or individual choices have a short shelf life. In these instances, there might simply not be enough time or data to predict likelihoods for individual choices. We could, however, predict likelihoods for certain facets of our choices, as long as their cardinality remains relatively low.

Consider the following example, in which we recommend products based on the acceptance of other products in the same category. In our Inline Service (ILS), Oracle Real-Time Decisions will be used to recommend a single product based on a single performance goal: Likelihood.

Choice Groups Setup

Products that may be recommended are stored in a choice group Products (we will use static choices, but this approach could be implemented for dynamic choices also). Product choices have an attribute Category which will contain a category name. We will use a second and separate dynamic choice group Categories to record acceptance of the different product categories.

Note that we never intend to return any choices from the Categories choice group to a client. It is configured using a dummy source and will not contain any actual choices. This group is only used within the ILS for predicting likelihoods. Statistics for this group may, however, be viewed in Decision Center reports.

Recording Events

Similar to the example for analytical models, we will record events against a dynamically generated choice representing a facet value rather than against the actual choice. In this example, both the actual choice and the event to record will be passed through a request represented as Strings.

// create a new choice to represent the category facet

CategoriesChoice c = new CategoriesChoice(Categories.getPrototype());

// set properties of the choice (SDOId should be of the form "{ChoiceGroupId}${ChoiceLabel}")

c.setSDOId("Categories" + "$" + Products.getChoice(request.getChoice()).getCategory());

// record event in model (catching an exception just in case)

try { c.recordEvent(request.getEvent()); } catch (Exception e) { logError("Exception: " + e); }

Model Setup

Our model setup is practically identical to before, but this time we’ll enable “Use for prediction”.

Predicting Likelihoods

// get instance of the model used for predicting Category Events

CategoryEvents m = CategoryEvents.getInstance();

// return the likelihood based on the generated SDOId and the "Accepted" event

return m.getChoiceEventLikelihood("Categories$" + product.getCategory(), event);
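To make the record-and-predict flow concrete outside of Oracle Real-Time Decisions, here is a minimal plain-Java sketch. It is not the RTD API: the class FacetModelSketch, its methods, and the "Presented" baseline event are all assumptions made for illustration, and the likelihood is a simple observed frequency rather than whatever the RTD model computes internally.

```java
import java.util.HashMap;
import java.util.Map;

/** Plain-Java stand-in for a facet-level model (hypothetical, not the RTD API). */
final class FacetModelSketch {

    // facet key -> event name -> number of times the event was recorded
    private final Map<String, Map<String, Integer>> counts = new HashMap<>();

    /** Builds a key of the form "{ChoiceGroupId}${ChoiceLabel}". */
    static String facetKey(String groupId, String label) {
        return groupId + "$" + label;
    }

    /** Records one event against the facet value instead of the product. */
    void recordEvent(String key, String event) {
        counts.computeIfAbsent(key, k -> new HashMap<>())
              .merge(event, 1, Integer::sum);
    }

    /**
     * Observed frequency of the event relative to the assumed "Presented"
     * baseline; returns 0.0 when nothing has been presented for this facet.
     */
    double likelihood(String key, String event) {
        Map<String, Integer> byEvent = counts.get(key);
        if (byEvent == null) {
            return 0.0;
        }
        int presented = byEvent.getOrDefault("Presented", 0);
        if (presented == 0) {
            return 0.0;
        }
        return byEvent.getOrDefault(event, 0) / (double) presented;
    }
}
```

Because events are keyed on a value such as Categories$Books rather than on a product, a product added to that category later would pick up the same likelihood without any history of its own.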

Choice Group Scores Setup

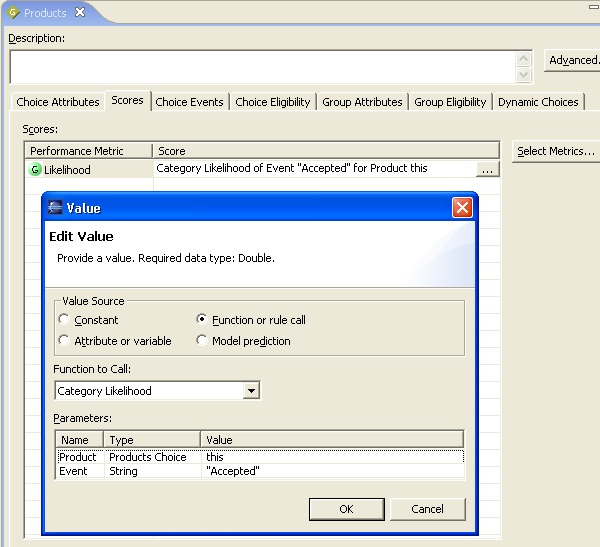

On the scores tab for the Products choice group we configure the Likelihood performance goal to be populated by the PredictLikelihood function using parameters this and “Accepted”. The keyword this refers to the particular choice being scored and will ensure each choice is scored according to its category facet.

That is all that is required to score choices against a facet. We can now create decisions and advisors that use these predictions to recommend products based on their categories.

In this example, we have predicted likelihoods based on a single product facet. As a result, products in the same category will be scored the same. In practical implementations this will rarely be an issue, because there will presumably be multiple performance goals. Also, likelihoods may be mixed with product-specific attributes like price or cost, resulting in score differentiation between products regardless of equality in likelihoods.
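As a rough illustration of that last point, the sketch below multiplies a shared category likelihood by each product's price, so two products in the same category end up with different scores. The class ScoreMixSketch, the Product fields, and the likelihood times price formula are all hypothetical; in Oracle Real-Time Decisions this kind of mixing would presumably be configured through performance goals rather than coded like this.

```java
import java.util.List;
import java.util.Map;

/** Hypothetical scoring sketch: category likelihood weighted by price. */
final class ScoreMixSketch {

    /** Hypothetical product: a name, its category facet, and a price. */
    static final class Product {
        final String name;
        final String category;
        final double price;

        Product(String name, String category, double price) {
            this.name = name;
            this.category = category;
            this.price = price;
        }
    }

    /** Assumed score formula: likelihood of acceptance times price. */
    static double score(double categoryLikelihood, double price) {
        return categoryLikelihood * price;
    }

    /** Returns the highest-scoring product, or null for an empty list. */
    static Product best(List<Product> products,
                        Map<String, Double> likelihoodByCategory) {
        Product winner = null;
        double bestScore = Double.NEGATIVE_INFINITY;
        for (Product p : products) {
            // Products in the same category share this likelihood...
            double likelihood = likelihoodByCategory.getOrDefault(p.category, 0.0);
            // ...but the price term still separates their scores.
            double s = score(likelihood, p.price);
            if (s > bestScore) {
                bestScore = s;
                winner = p;
            }
        }
        return winner;
    }
}
```

With equal likelihoods, the more expensive product wins here; a different weighting, or cost instead of price, would change that ordering.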

In a later post, we will discuss how we can expand on this to include multiple product facets in our likelihood prediction.

Marketing Personalization and the Uncanny Valley

Dear [prospect.first_name],

Following our last discussion on [prospect.last_contact_date] concerning [prospect.subject_area] I think the following article would be of particular interest to you.

Seth Godin writes:

Sure, it’s easy to grab a first name from a database or glean some info from a profile.

But when you pretend to know me, you’ve already started our relationship with a lie. You’ve cheapened the tools we use to recognize each other and you’ve tricked me, at least a little.

Increased familiarity begets heightened expectations. Personalization has its own uncanny valley.

The uncanny valley is a hypothesis in the field of robotics and 3D computer animation, which holds that when human replicas look and act almost, but not perfectly, like actual human beings, it causes a response of revulsion among human observers.

When you treat your customers as though you know them personally they will be personally offended if you do not. Beware of the eerie hollow of broken promise.